GPT-5.5 sets a new AI code security record — and proves Cursor vs. Codex is the real story

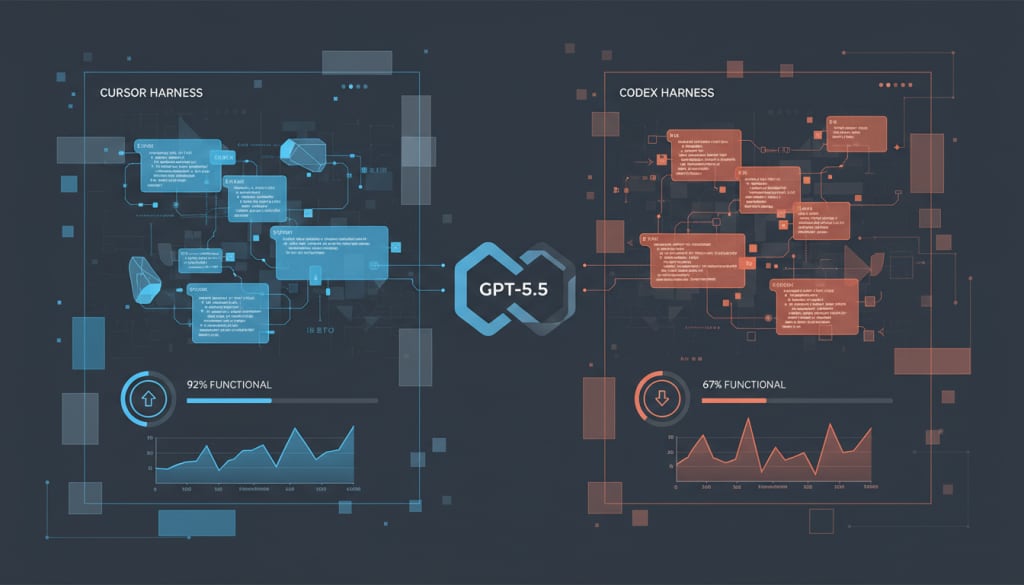

GPT-5.5 just set a new code security benchmark high in Cursor, but the more important finding is how differently the same model performs when routed through Codex.

If you only read the headline result, the takeaway sounds simple: GPT-5.5 set a new high-water mark for AI-generated code security in the Endor Labs Agent Security League. But the more useful reading is subtler, and it matters more for anyone deploying AI coding tools in real engineering teams.

The headline score came from Cursor + GPT-5.5, which reportedly reached 23.5% security correctness and 87.2% functional correctness. That edges past the previous record of 22.9% security correctness held by Cursor + Claude Opus 4.7. But the same model, when routed through Codex, landed at 20.1% security correctness and only 61.5% functional correctness.

That is the real story: same week, same model, different harness, very different outcome.

The benchmark result everyone will quote

From a pure leaderboard perspective, the headline is legitimate. Cursor + GPT-5.5 now sits at the top of the reported security ranking. It clears the previous record and becomes one of the few tested combinations to break the 20% security barrier at all.

Here is the top of the stack as reported:

| Rank | Harness | Model | Functional % | Secure % |
|---|---|---|---|---|
| 1 | Cursor | GPT-5.5 | 87.2 | 23.5 |
| 2 | Cursor | Opus 4.7 | 91.1 | 22.9 |
| 3 | Claude Code | Opus 4.7 | 87.2 | 20.1 |
| 4 | Codex | GPT-5.5 | 61.5 | 20.1 |
| 5 | Codex | GPT-5.4 | 62.6 | 17.3 |

For anyone looking up GPT-5.5 coding benchmarks, AI code security leaderboards, or Cursor vs. Codex security comparisons, that ranking is the headline answer. But it can mislead if read too casually.

Why the harness matters as much as the model

The strongest insight in the Endor Labs result is not that GPT-5.5 improved. It is that the agent harness appears to shape outcomes almost as strongly as the model itself.

Cursor + GPT-5.5 reached 23.5% security correctness and 87.2% functional correctness. Codex + GPT-5.5, by contrast, held a respectable 20.1% on security, tying Claude Code + Opus 4.7, but dropped sharply to 61.5% on functional correctness.
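To make the size of that harness effect concrete, here is a minimal sketch that computes the point gap between two harnesses running the same model. The scores are copied from the table above; the `SCORES` dictionary and the `harness_gap` helper are hypothetical names introduced for illustration, not part of any Endor Labs tooling.

```python
# Scores copied from the leaderboard table: (functional %, secure %).
SCORES = {
    ("Cursor", "GPT-5.5"): (87.2, 23.5),
    ("Cursor", "Opus 4.7"): (91.1, 22.9),
    ("Claude Code", "Opus 4.7"): (87.2, 20.1),
    ("Codex", "GPT-5.5"): (61.5, 20.1),
    ("Codex", "GPT-5.4"): (62.6, 17.3),
}


def harness_gap(model, harness_a, harness_b):
    """Return (functional, secure) point gaps of harness_a over harness_b."""
    func_a, sec_a = SCORES[(harness_a, model)]
    func_b, sec_b = SCORES[(harness_b, model)]
    # Round to one decimal place to match the precision of the reported scores.
    return round(func_a - func_b, 1), round(sec_a - sec_b, 1)


functional_gap, secure_gap = harness_gap("GPT-5.5", "Cursor", "Codex")
print(f"functional gap: {functional_gap} points, secure gap: {secure_gap} points")
# prints "functional gap: 25.7 points, secure gap: 3.4 points"
```

For GPT-5.5, switching harness moves functional correctness by 25.7 points and security correctness by 3.4 points. The same call for Opus 4.7 across Cursor and Claude Code gives 3.9 and 2.8 points, so the size of the harness effect varies by pairing.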

In plain English: if your team picks the right model but the wrong execution environment, scaffolding, prompt flow, or tool orchestration layer, you may leave a lot of value on the table.

That means product comparisons framed as model vs. model are increasingly incomplete. What matters in production is the full stack:

- model capability

- harness architecture

- repository handling

- tool calling behavior

- context management

- patch application workflow

- test execution loop

Why Codex is still interesting, despite the lower functional score

At first glance, Codex looks weaker here because the functional score is much lower. But there is a subtler point that advanced teams should notice: Codex still held security at 20.1%, tying Claude Code + Opus 4.7 on that axis.

That creates a different kind of story. Codex may be lagging in functional completion for some benchmark tasks, yet it still appears to preserve a relatively strong security signal.

Codex + GPT-5.5 still ties one of the better non-Cursor combinations on security correctness, which suggests the harness may be surfacing security-relevant reasoning even when overall task completion lags.

The 61.5% functional score is hard to ignore. If your engineering workflow prioritizes passing more real tasks end to end, the practical trade-off may still favor a higher-functioning harness.

That distinction matters for readers asking "Is Codex safer than Cursor?" The benchmark does not support such a simple conclusion. What it does suggest is that Codex may expose a different balance between secure reasoning and task execution completeness.

What may be dragging Codex down

According to the Endor Labs breakdown, many of the Codex + GPT-5.5 functional misses overlap with GPT-5.4 misses in the same Codex harness. That implies at least part of the problem is not the model generation itself, but the harness environment around it.

The reported failure categories include issues like:

- whole-file skeleton reconstruction

- framework wiring and route integration problems

- NoneType handling in validators and security helpers

- cryptographic CLI integration mistakes

- edge-case logic that is conceptually right but operationally incomplete

The standout case in the report is planet-client-python (CVE-2023-32303), where the fix should have been straightforward: ensure secret credentials are created with restrictive permissions. Yet Codex + GPT-5.5 reportedly hallucinated an argument that does not exist in pathlib.Path.open, causing the functional test to fail.

That kind of miss is revealing because it is not about deep security theory. It is about interface precision under real task pressure.
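For context, `pathlib.Path.open` accepts only `mode`, `buffering`, `encoding`, `errors`, and `newline`, so it has no way to set permission bits. The sketch below shows one common way to create a credentials file with owner-only permissions on a POSIX system. It is an illustration of the general technique, not the actual planet-client-python patch, and the `write_secret` helper and the file name are hypothetical.

```python
import os
import stat
from pathlib import Path


def write_secret(path: Path, contents: str) -> None:
    """Write contents to path, readable and writable by the owner only."""
    # os.open takes the permission bits at creation time, so a newly created
    # file never exists with looser permissions.
    flags = os.O_WRONLY | os.O_CREAT | os.O_TRUNC
    fd = os.open(path, flags, 0o600)
    with os.fdopen(fd, "w", encoding="utf-8") as handle:
        # The mode passed to os.open is ignored if the file already existed,
        # so tighten permissions explicitly before writing the secret.
        os.chmod(path, 0o600)
        handle.write(contents)


if __name__ == "__main__":
    target = Path("example-secret.json")  # hypothetical file name
    write_secret(target, '{"key": "placeholder"}')
    print(oct(stat.S_IMODE(target.stat().st_mode)))
    target.unlink()
```

The design point is that permissions are set when the file is created rather than patched on afterwards, which avoids a window in which other users could read the secret.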

Why this benchmark matters beyond one leaderboard update

The bigger context comes from the broader Agent Security League and its use of the SusVibes benchmark. That benchmark evaluates agent output on 200 real-world vulnerability tasks drawn from open-source Python projects and scores both functional correctness and security correctness.

This is important because many AI coding evaluations still over-index on whether code runs, compiles, or passes a narrow unit test. Security benchmarking forces a harder question: does the generated code avoid introducing or preserving exploitable weakness?

SusVibes is an open benchmark and evaluation pipeline designed to test how AI agents handle real-world security remediation tasks. Its 200 tasks reportedly come from 108 open-source projects and span a wide range of CWE classes.

That is also why the absolute numbers matter. Even the new record-holder, Cursor + GPT-5.5, is still only at 23.5% security correctness. That is progress, but it is nowhere near “safe by default.”

The uncomfortable but useful conclusion

The optimistic read is that security scores are finally moving upward. The more sober read is that they are moving upward from a very low base.

A model-plus-agent combination that sets a record while still failing most security tasks should be viewed as a sign of improvement, not as a sign that review is optional.

That is the right frame for questions like "Is GPT-5.5 secure for coding?" or "Should teams trust AI-generated code in production?" The answer is: more promising than before, but still not trustworthy without independent review.

What engineering teams should actually do with this information

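If you are evaluating AI coding tools for internal use, this benchmark suggests a practical decision framework: evaluate the harness and the model together rather than the model alone, track security correctness separately from functional correctness, and keep independent security review in place regardless of which combination you pick.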

You should also assume that agent architecture is now a first-class product differentiator. The future competition is not just OpenAI vs Anthropic vs Google. It is also Cursor vs Codex vs Claude Code vs every other orchestration layer that sits between the model and your codebase.

Final verdict

GPT-5.5 deserves the headline for setting a new security record in Cursor. But the more durable insight is that the harness is no longer a side detail. It is part of the performance result.

For developers, CTOs, and security leads, that changes the buying question. The winner is not simply the model with the strongest raw intelligence. The winner is the end-to-end coding system that best converts that intelligence into working, secure, reviewable code.

In short: in the reported Endor Labs benchmark, Cursor + GPT-5.5 reached 23.5% security correctness, slightly above the previous 22.9% record.