Why OpenClaw 2026.5.12 Feels Like a Bigger Deal Than a Normal Update

OpenClaw 2026.5.12 is not just another feature drop. It sharpens the runtime boundary around OpenAI agent turns, makes ChatGPT subscription-backed setup more practical, and moves the platform closer to cleaner agent architecture.

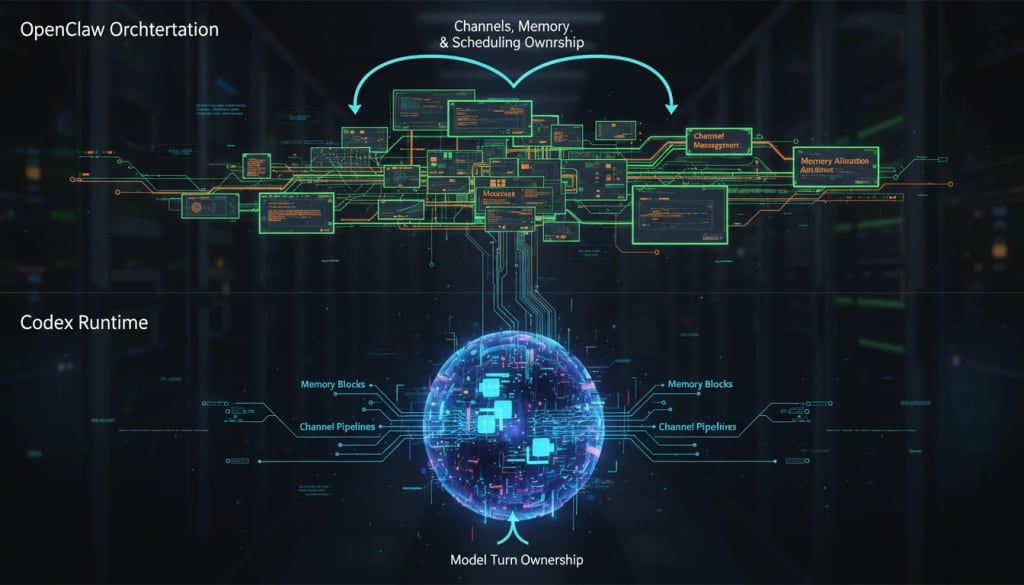

OpenClaw 2026.5.12 matters because it makes the OpenAI agent path feel more native, less improvised, and more operationally coherent. The biggest headline is not just that Codex is supported. It is that the Codex harness is now treated like the serious runtime boundary for OpenAI agent turns, while OpenClaw keeps ownership of the layers around the turn: channels, memory, scheduling, approvals, and delivery.

Key takeaways

- OpenClaw is separating the OpenAI agent-turn runtime from the rest of the agent operating layer more cleanly

- openai/* is the canonical model route, while Codex becomes the native runtime path for those agent turns

- ChatGPT/Codex subscription-backed auth is now far more practical for OpenClaw operators

- Tool discovery and visible reply behavior both benefit from a less bloated, more intentional runtime design

The architecture shift is the real story

Most people will look at this update and think about convenience first: subscription auth, one login flow, easier setup. That part matters. But the bigger story is architectural.

Before a runtime split gets cleaned up, a platform often ends up translating too much. It has to manage model behavior, tool behavior, reply behavior, state behavior, and execution behavior all through the same orchestration lane. That works for a while, but it creates friction. Every extra translation layer adds one more place for context clutter, tool mismatch, and odd runtime behavior to appear.

With the Codex harness path, OpenClaw is leaning into a clearer division of labor. The Codex runtime can own the low-level OpenAI agent turn, while OpenClaw continues to own what makes the agent usable in the real world: channels, persona, memory, jobs, rules, and system integration.

That is the kind of change users feel even when they never open the docs. The system becomes easier to reason about because the boundaries are cleaner.
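
The division of labor described above can be sketched in a few lines. This is an illustration only, not OpenClaw's code: `TurnRuntime`, `FakeCodexRuntime`, and `Orchestrator` are hypothetical names, and the fake runtime simply echoes its input instead of calling any real service.

```python
from dataclasses import dataclass, field
from typing import Protocol


class TurnRuntime(Protocol):
    """Hypothetical interface: whatever executes one low-level agent turn."""

    def run_turn(self, prompt: str) -> str: ...


class FakeCodexRuntime:
    """Stand-in for a native runtime; it only echoes and calls no real API."""

    def run_turn(self, prompt: str) -> str:
        return f"[turn result for: {prompt}]"


@dataclass
class Orchestrator:
    """Owns the layers around the turn: here, memory and channel delivery."""

    runtime: TurnRuntime
    memory: list[str] = field(default_factory=list)
    outbox: list[tuple[str, str]] = field(default_factory=list)

    def handle(self, channel: str, text: str) -> str:
        self.memory.append(text)               # memory stays with the orchestrator
        result = self.runtime.run_turn(text)   # the turn itself is delegated
        self.outbox.append((channel, result))  # delivery stays with the orchestrator
        return result


agent = Orchestrator(runtime=FakeCodexRuntime())
reply = agent.handle("chat", "summarize today's jobs")
```

The point of the sketch is that the orchestrator never needs to know how the turn is executed. Swapping the runtime does not touch memory or delivery.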

Why it matters

This kind of runtime cleanup matters for three reasons.

First, it reduces ambiguity. Operators no longer have to mentally blur together provider, auth method, model ref, and runtime path as one confusing block.

Second, it improves reliability. When the low-level execution lane belongs to the runtime that is actually being built for that model family, there is less pressure on OpenClaw to imitate or translate every detail itself.

Third, it improves operator confidence. If you run multiple agents, care about session isolation, or want fewer weird edge cases around tool use and delivery, cleaner ownership boundaries are not just nice design; they are operational value.

What changed in practice

The official docs now make three practical ideas much clearer than before.

1. openai/* stays canonical

The OpenAI provider docs frame openai/* as the canonical route. That matters because it decouples the public model reference from older compatibility-era naming and transport habits.
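
One way to picture that decoupling is to keep the model reference, the auth method, and the runtime as separate fields rather than one fused string. The sketch below is a rough illustration under stated assumptions: `AgentModelSettings`, `parse_model_ref`, the `example-model` name, and the `"subscription"` and `"codex"` labels are all hypothetical and do not reflect OpenClaw's actual config schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentModelSettings:
    """Hypothetical record keeping four concerns in separate fields."""

    provider: str     # who serves the model, e.g. "openai"
    model: str        # model name within that provider
    auth_method: str  # illustrative label such as "subscription" or "api-key"
    runtime: str      # which runtime executes the turn, e.g. "codex"


def parse_model_ref(ref: str) -> tuple[str, str]:
    """Split a 'provider/model' reference into its two parts."""
    provider, sep, model = ref.partition("/")
    if not sep or not provider or not model:
        raise ValueError(f"expected 'provider/model', got {ref!r}")
    return provider, model


# "example-model" is a placeholder, not a real model name.
provider, model = parse_model_ref("openai/example-model")
settings = AgentModelSettings(provider, model, auth_method="subscription", runtime="codex")
```

Because the reference only names the provider and model, the auth method or runtime can change without the public model ref changing with it.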

2. Subscription auth has a real first-class story

For users who want ChatGPT/Codex subscription-backed agent use, the documented login flow is:

openclaw models auth login --provider openai-codex

That is important because it turns the subscription path into a normal onboarding story instead of a niche workaround.

3. Visible replies become more intentional

The Codex harness docs describe a model where visible replies default toward deliberate message-tool behavior unless automatic delivery is explicitly configured. That is a subtle but powerful UX improvement. Internal execution stays internal more cleanly, while visible replies become a choice instead of a leak.
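
A minimal sketch of that behavior, assuming a single on/off switch for automatic delivery: `ReplyGate`, `auto_deliver`, and both method names are hypothetical, chosen only to illustrate the idea that an explicit message-tool call is what makes output visible.

```python
from dataclasses import dataclass, field


@dataclass
class ReplyGate:
    """Hypothetical gate deciding what reaches the user-facing channel."""

    auto_deliver: bool = False  # assumed config switch, off by default
    delivered: list[str] = field(default_factory=list)

    def on_turn_output(self, text: str) -> None:
        # Plain turn output stays internal unless automatic delivery is enabled.
        if self.auto_deliver:
            self.delivered.append(text)

    def on_message_tool(self, text: str) -> None:
        # An explicit message-tool call is always a deliberate visible reply.
        self.delivered.append(text)


gate = ReplyGate()
gate.on_turn_output("scratch notes while planning")
gate.on_message_tool("Your report is ready.")
# gate.delivered now holds only the explicit message.
```

With the default setting, the planning notes never reach the channel. Only the deliberate message does.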

Before vs after at a glance

| Area | Before this runtime direction | After the Codex harness shift |
|---|---|---|
| OpenAI agent-turn ownership | More translation pressure inside OpenClaw | Clearer native Codex runtime ownership |
| Subscription-backed setup story | Easier to misunderstand or treat as a workaround | Much more first-class and documented |
| Tool loading | More risk of schema clutter and oversized context | Stronger direction toward search/discovery-first behavior |
| Visible replies | Easier to blur internal execution with user-facing output | More intentional separation between internal work and visible replies |

Tool discovery may be the most underrated win

A lot of agent problems are not model-quality problems. They are tool-surface problems. When too much schema gets stuffed into initial context, the model starts with a noisy workspace. Tool discovery becomes guessier, not smarter.

The practical value of a search/discovery-first direction is that it keeps the starting context smaller and the loaded tool knowledge more relevant to the actual task. That is good for costs, but it is even better for tool selection quality.

If that pattern spreads beyond the Codex path, it could end up mattering almost as much as the runtime change itself.
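
To make the search/discovery-first idea concrete, here is a toy sketch that loads a tool's schema only when the task text matches it. The `Tool` record, the three catalog entries, and the word-overlap scoring are assumptions made for illustration. A real discovery mechanism would likely rank differently.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tool:
    name: str
    description: str
    schema: dict  # full parameter schema, potentially large


# Hypothetical catalog; none of these are real OpenClaw tools.
CATALOG = [
    Tool("send_email", "send email message", {"to": "string", "body": "string"}),
    Tool("create_invoice", "create customer invoice", {"amount": "number"}),
    Tool("search_calendar", "search calendar events", {"query": "string"}),
]


def search_tools(query: str, catalog: list[Tool], limit: int = 2) -> list[Tool]:
    """Rank tools by how many query words appear in the name or description."""
    words = set(query.lower().split())
    scored = []
    for tool in catalog:
        text = f"{tool.name.replace('_', ' ')} {tool.description}".lower()
        score = len(words & set(text.split()))
        if score:
            scored.append((score, tool))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [tool for _, tool in scored[:limit]]


# Only matching tools contribute their schemas to the working context.
loaded = search_tools("create customer invoice", CATALOG)
```

Here the starting context carries one schema instead of three. With a catalog of hundreds of tools, the same approach keeps the initial context small regardless of catalog size.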

What to check first before you switch

If you are planning to lean into this runtime direction, verify these basics first:

- Is the Codex plugin enabled where you expect it to be enabled?

- Are your main agent model refs already on openai/*?

- Did you authenticate through the intended subscription or fallback path?

- Are your visible-reply expectations aligned with your channel behavior?

- Have you tested one real multi-tool workflow instead of only checking model listing or auth status?
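
The first three checks above lend themselves to a small pre-flight script. The sketch below assumes a plain dictionary of settings. The keys `codex_plugin_enabled`, `model_refs`, and `auth_path` are hypothetical and are not OpenClaw's actual config format, so adapt them to wherever your setup stores this information.

```python
def preflight(config: dict) -> list[str]:
    """Return human-readable problems; an empty list means the basics pass."""
    problems = []
    if not config.get("codex_plugin_enabled", False):
        problems.append("Codex plugin is not enabled")
    for ref in config.get("model_refs", []):
        if not ref.startswith("openai/"):
            problems.append(f"model ref not on openai/*: {ref}")
    if config.get("auth_path") not in {"subscription", "fallback"}:
        problems.append("auth path is not set to subscription or fallback")
    return problems


# Hypothetical settings, not OpenClaw's actual config format.
example = {
    "codex_plugin_enabled": True,
    "model_refs": ["openai/example-model", "legacy/example-model"],
    "auth_path": "subscription",
}
issues = preflight(example)
```

A script like this cannot cover the last two items. Visible-reply behavior and a real multi-tool workflow still need a hands-on test in the channel you actually use.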

Where the free explanation ends and the paid Loot begins

This article is the high-level read: what changed, why it matters, and why the 2026.5.12 release feels more important than a normal version bump.

If you want the operator-ready version (the setup path, migration checklist, runtime decision matrix, and practical verification flow), the companion paid Loot goes deeper.

That is the piece to use if you are onboarding clients, publishing a tutorial, or migrating your own OpenClaw setup and want fewer avoidable mistakes.

Practical LinkLoot angle

For builders and operators, the real opportunity here is not just to say "OpenClaw supports Codex better now." The opportunity is to build cleaner AI employee setups on top of a more stable runtime split.

That naturally connects to broader workflow design and agent tooling strategy too: /guides/ai-agent-tools

FAQ

No. The docs separate agent turns from other OpenAI surfaces such as images, speech, embeddings, and realtime.

Final takeaway

OpenClaw 2026.5.12 feels important because it is not merely additive. It clarifies what owns the OpenAI agent turn and what owns the rest of the agent system.

That kind of cleanup usually pays off twice: once immediately in setup and behavior, and again later in how quickly the platform can evolve without tripping over its own abstractions.

If you care about AI agents that feel less patched together and more intentionally built, this is the kind of update worth paying attention to.