AI Context Flow hits AppSumo with MCP-ready shared memory for AI tools

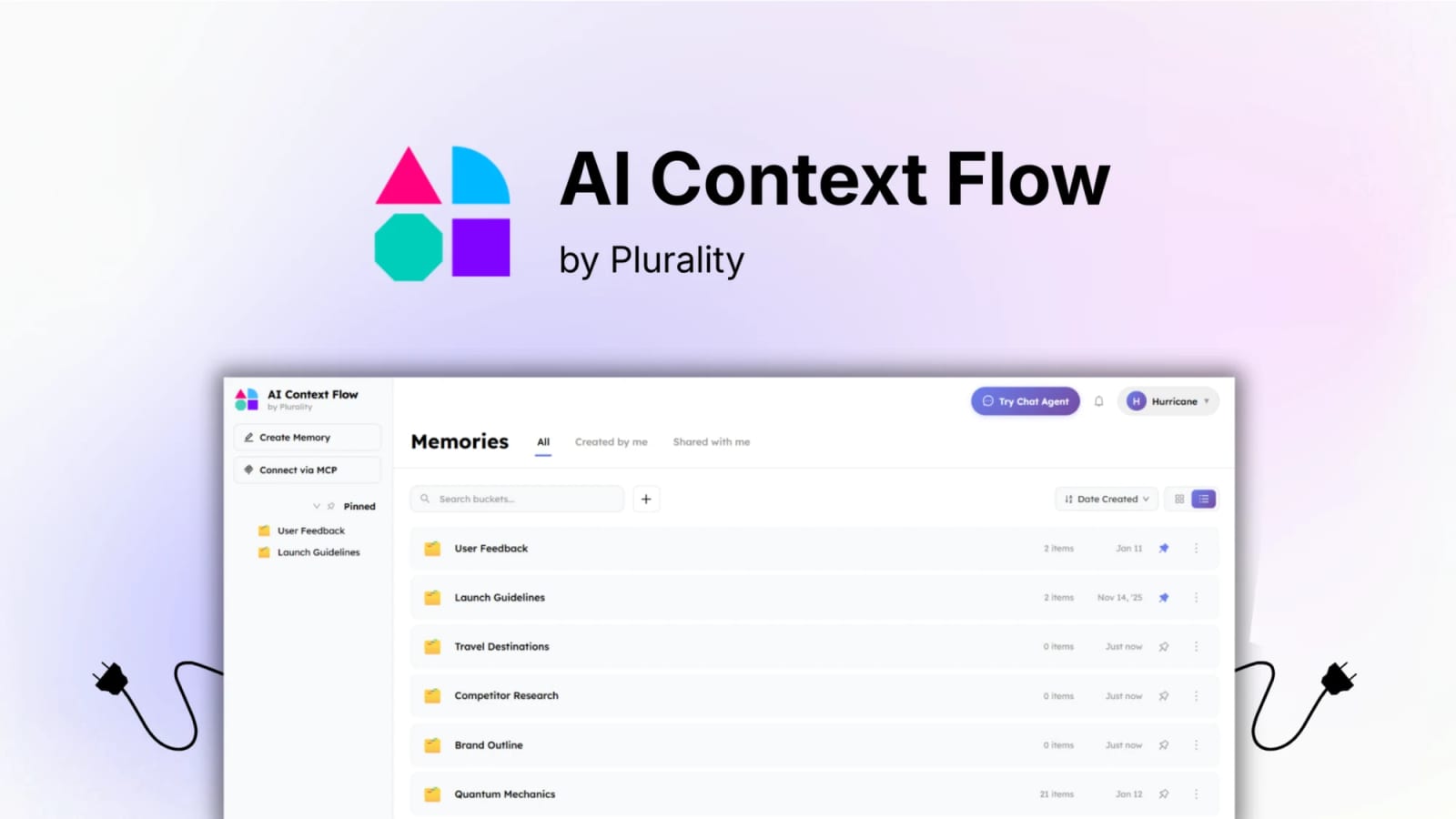

AI Context Flow is now live on AppSumo with a lifetime deal pitch centered on cross-platform AI memory, prompt optimization, and MCP access for tools like Cursor and Claude Code.

The deal targets users who want one memory layer across ChatGPT, Claude, Gemini, Perplexity, and MCP-compatible tools. According to the AppSumo listing, the product combines shared memory buckets, one-click prompt optimization, and an MCP server that can connect to tools such as Cursor, Claude Code, and Windsurf. The official product page corroborates the branding and positioning, while the deal page provides the concrete offer terms and feature claims.

Key takeaways

- The main promise is cross-platform AI memory instead of siloed memory inside a single assistant.

- AppSumo positions the offer as lifetime access with Pro-tier updates, subject to the deal terms.

- The listing highlights MCP access, prompt optimization, memory buckets, and browser-side workflow support.

- This looks most useful for multi-tool users who jump between browser chat, IDE agents, and desktop AI clients.

- The real value depends on whether the MCP and privacy claims hold up in your own workflow.

Practical LinkLoot angle

This is interesting if you already work across multiple AI tools and keep reloading the same context manually. A creator or operator could keep client briefs, brand voice, campaign notes, and workflow rules in separate memory buckets, then reuse them across ChatGPT, Claude, and an MCP-enabled coding assistant without copy-pasting the same setup every session.

| Deal question | What the listing claims | What it means for buyers |
|---|---|---|
| Core function | Universal AI memory across tools | Less repetitive context setup if you switch assistants often |
| Prompt helper | One-click optimization | Helpful for non-experts who want cleaner prompts fast |
| MCP support | Works with MCP-compatible tools | Potentially useful beyond browser chat |
| Access model | Lifetime deal on AppSumo | Attractive only if the product stays actively maintained |
| Privacy pitch | TEE-based privacy and user control | Needs hands-on verification, not blind trust |

A practical use case: an agency could store separate memory buckets per client, then use those buckets in research chats, writing chats, and coding-agent workflows without rebuilding context from scratch every time.
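To make the per-client bucket idea concrete, here is a minimal sketch in Python. It is not the product's API: the `MemoryBucket` class, the `build_prompt` helper, and the client names are all hypothetical, and the listing does not describe how buckets are stored or injected. It only shows the general pattern of keeping context in one place and reusing it across different tasks.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class MemoryBucket:
    """Hypothetical container for one client's reusable context."""
    name: str
    notes: Dict[str, str] = field(default_factory=dict)

    def render(self) -> str:
        # Flatten the notes into a plain-text block any assistant can read.
        lines = [f"[{self.name}]"]
        lines += [f"{key}: {value}" for key, value in self.notes.items()]
        return "\n".join(lines)


def build_prompt(bucket: MemoryBucket, task: str) -> str:
    # Prepend the shared context so the task does not have to restate it.
    return f"{bucket.render()}\n\nTask: {task}"


# Illustrative client data only; one bucket per client keeps context separated.
buckets = {
    "acme": MemoryBucket("acme", {"brand voice": "plain, direct", "audience": "IT managers"}),
    "globex": MemoryBucket("globex", {"brand voice": "playful", "audience": "students"}),
}

# The same bucket feeds two different workflows without re-entering the context.
research_prompt = build_prompt(buckets["acme"], "Summarize competitor pricing pages.")
writing_prompt = build_prompt(buckets["acme"], "Draft a launch email.")
```

Keeping one bucket per client means context from one account never leaks into another client's prompt, which is the separation an agency would want to confirm in the real product.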

What to verify before you act

Confirm exactly which MCP clients you plan to use and whether they support the current authentication flow cleanly. Also verify what “future AI models” means in the deal terms, because the listing explicitly notes that future model access may be discounted or require an add-on. Finally, inspect how data deletion, export, and access revocation work before committing sensitive client or company context.

Quick comparison

| If you need... | This deal may fit if... |
|---|---|
| Cross-tool memory | You actively use more than one AI assistant or agent client |
| Better prompts fast | You want optimization without manual prompt rewriting |
| IDE + browser continuity | You plan to use the MCP server, not just the extension |

The pitch is that memory travels across multiple AI tools instead of staying locked to one platform.

If you compare AI utility deals regularly, the next guide to read is /guides/lifetime-software-deals.

This looks like a strong category fit for people who already run mixed AI workflows, but it is not an automatic buy. The product idea is compelling; the real decision comes down to MCP reliability, privacy confidence, and whether the AppSumo terms stay favorable after the initial purchase.