Voker wants to make AI agent analytics usable beyond trace dashboards

Voker launched as an analytics platform for AI agents, pitching self-serve insights, performance tracking, and business impact views instead of engineer-only trace spelunking.

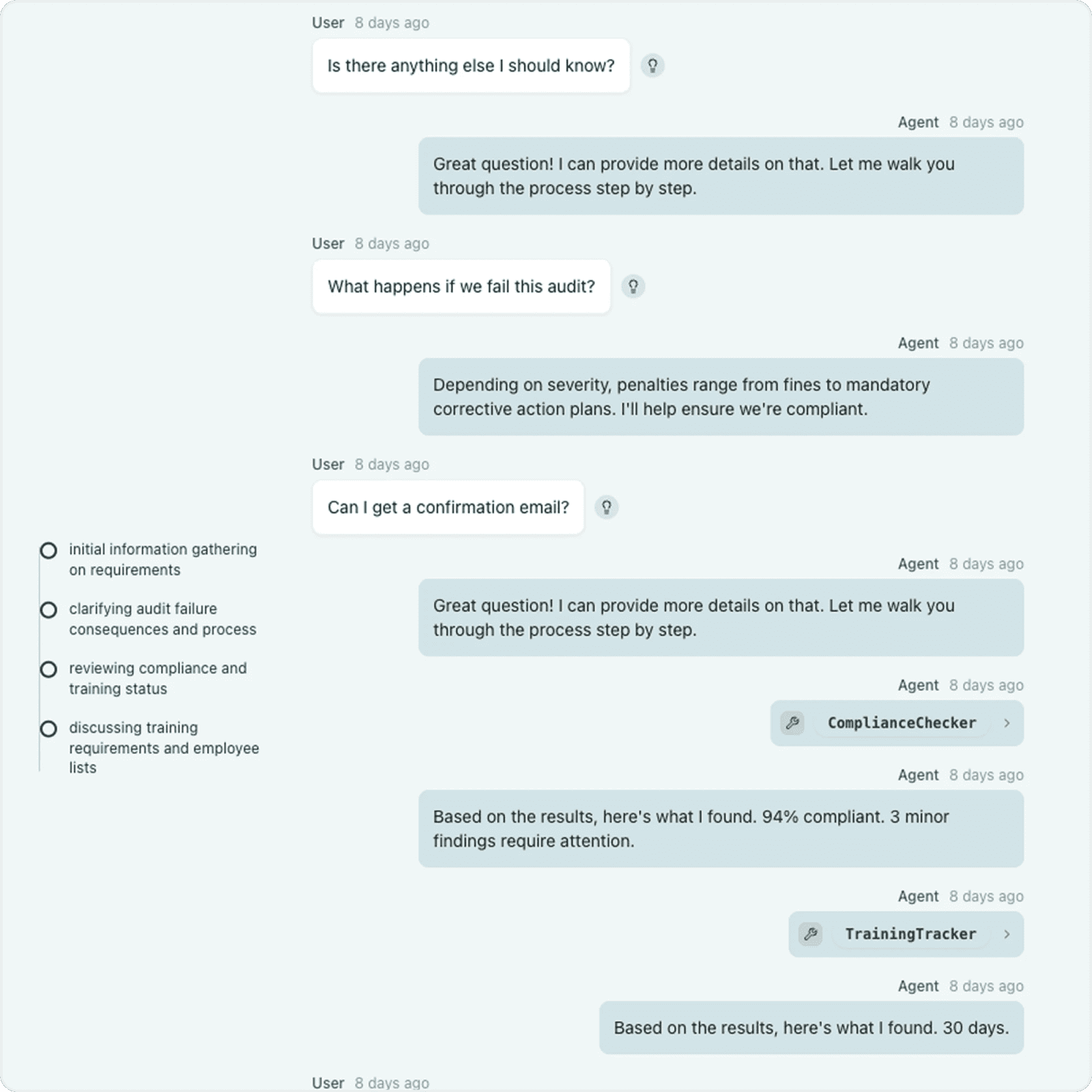

Voker's platform tries to turn messy conversation traces into something product, ops, and growth teams can actually use. The company pitches self-serve analytics, performance intelligence, and business impact reporting for agent workflows, with integrations for common model stacks and frameworks. Y Combinator also describes Voker as an active Summer 2024 company focused on monitoring and improving AI agents.

Key takeaways

- Voker is not selling another raw logging interface; it is selling agent analytics for non-engineers who need answers without SQL or trace deep-dives.

- The official site emphasizes three buckets: self-service analytics, performance intelligence, and business impact.

- The platform targets teams running 1,000+ chat sessions per month and multi-turn agent setups with tools, RAG, or MCP-style workflows.

- Voker says it supports common stacks including OpenAI, Anthropic, Gemini, LangChain, CrewAI, and the Vercel AI SDK.

- The Launch HN framing matters because the product is arriving while many teams have agents in production but still measure helpfulness, correction loops, and resolution quality poorly.

Why it matters

Most agent teams can already collect traces. The harder problem is converting those traces into operational decisions: where users get corrected, which intents stall, whether an update improved resolution rates, and whether the whole system moves retention or revenue instead of just token counts. Voker’s pitch is that PMs, analysts, and business stakeholders should be able to query those patterns directly instead of waiting for an engineer to translate log noise into a deck.

That is a real workflow shift if the product holds up. Teams comparing Langfuse- or LangSmith-style observability with stakeholder-facing reporting should ask whether they need deeper developer debugging, broader business analytics, or both.

| Team need | Voker’s pitch | Likely alternative |
|---|---|---|
| Product and ops self-serve insight | Dashboards around intents, corrections, and resolutions | A BI stack built on exported logs |
| Developer debugging depth | Enough analytics to spot issues and trends | A dedicated tracing platform |
| ROI storytelling | Business impact views tied to outcomes | Manual reporting across analytics tools |

If you are building that stack, LinkLoot’s /guides/ai-workflow-automation is the more relevant internal follow-up than a generic prompt guide.
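To make the "traces into decisions" step concrete, here is a minimal Python sketch of the kind of aggregation the questions above imply: correction and resolution rates per intent, plus a resolution-rate comparison across agent releases. It is not Voker's API or data model. The `Session` record, its field names, and the sample data are all hypothetical, and it assumes each session has already been labeled with an intent and an outcome by whatever tracing tool you export from.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Session:
    """Hypothetical per-session record exported from a tracing tool."""
    intent: str         # label assigned to the session (assumed to exist)
    corrections: int    # turns where the user corrected the agent
    resolved: bool      # whether the request was resolved by session end
    agent_version: str  # release of the agent that handled the session


def summarize_by_intent(sessions):
    """Group sessions by intent and compute per-intent rates."""
    buckets = defaultdict(list)
    for s in sessions:
        buckets[s.intent].append(s)

    summary = {}
    for intent, group in buckets.items():
        n = len(group)
        summary[intent] = {
            "sessions": n,
            # Share of sessions with at least one user correction.
            "correction_rate": sum(1 for s in group if s.corrections > 0) / n,
            # Share of sessions that ended resolved.
            "resolution_rate": sum(1 for s in group if s.resolved) / n,
        }
    return summary


def resolution_rate_by_version(sessions):
    """Compare resolution rates across agent releases."""
    buckets = defaultdict(list)
    for s in sessions:
        buckets[s.agent_version].append(s.resolved)
    return {version: sum(flags) / len(flags) for version, flags in buckets.items()}


# Made-up sample data for illustration only.
SAMPLE = [
    Session("refund", 0, True, "v1"),
    Session("refund", 2, False, "v1"),
    Session("refund", 1, True, "v2"),
    Session("refund", 0, True, "v2"),
    Session("billing", 0, True, "v1"),
    Session("billing", 0, True, "v2"),
]

if __name__ == "__main__":
    for intent, stats in summarize_by_intent(SAMPLE).items():
        print(intent, stats)
    print(resolution_rate_by_version(SAMPLE))
```

A script like this is roughly what the "BI stack built on exported logs" alternative amounts to at small scale. The practical question is whether a product such as Voker saves enough of this work, including the intent and outcome labeling the sketch takes for granted, to justify another tool.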

What to verify before you act

Start with the integration reality, not the marketing layer. Verify how much instrumentation work is still required in your stack, how Voker handles sensitive conversation data, and whether “own your data” plus self-hosting claims match your compliance needs. If your current observability tool already tracks traces well, the make-or-break test is whether Voker gives faster answer loops to product and ops teams without adding another analytics silo.

Also check the volume fit. The company explicitly frames the product around higher interaction counts and cross-functional teams, so a small prototype with a few hundred sessions a month may not feel the same pain that Voker is designed to solve.

Voker's analytics cover AI agent conversations, including intents, corrections, resolutions, and outcome-linked performance trends.